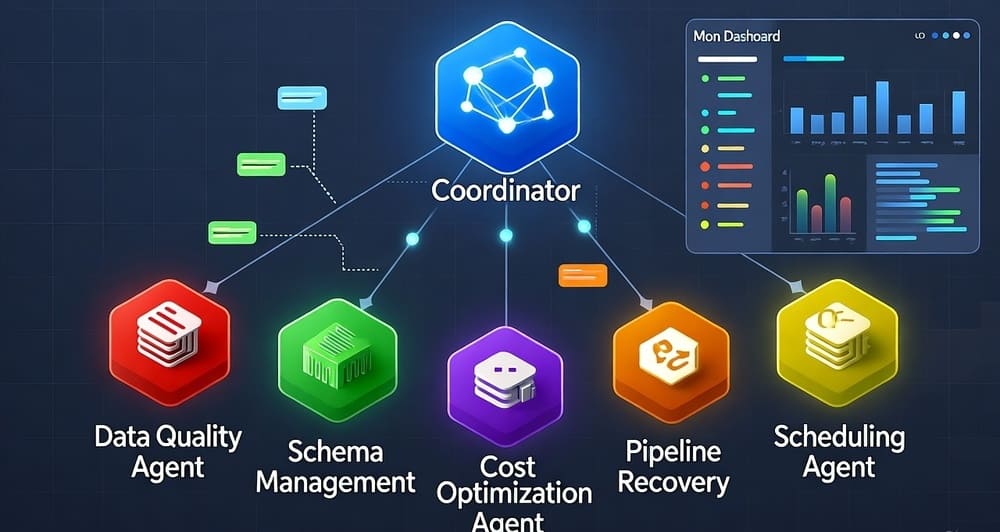

A hands-on guide to building MCP servers that connect Claude and other LLMs to your databases, dbt catalogs, and Airflow pipelines. Complete Python code included.

Category: AI

Open Source LLMs for Enterprise: Llama 3, Mistral, and the Self-Hosting Reality Check

A practical guide to deploying open-source LLMs in production. GPU requirements, vLLM setup, cost comparison with GPT-4, and when self-hosting actually pays off.

Prompt Engineering for Data Pipelines: Using LLMs to Clean, Classify, and Enrich Data

A hands-on guide to integrating LLMs into ETL pipelines for data classification, cleaning, and enrichment, with Python code, cost analysis, and fallback patterns.

LLM Inference Optimization: From 200ms to 50ms Per Token

A hands-on guide to cutting LLM inference latency 4x with quantization, KV cache tricks, vLLM, speculative decoding, and GPU selection.