Three months ago, our team started using Snowflake Cortex AI in production. Not as a proof of concept. Not in a sandbox. Real workloads, real data, real money on the line. We were already deep in the Snowflake ecosystem for our data warehouse, so the pitch was compelling: run LLM inference, vector search, and ML forecasting directly where our data lives, without moving anything to external services. After twelve weeks, I have a much clearer picture of where Snowflake Cortex AI genuinely delivers and where it quietly wastes your credits. This is the honest review I wish I had read before we started.

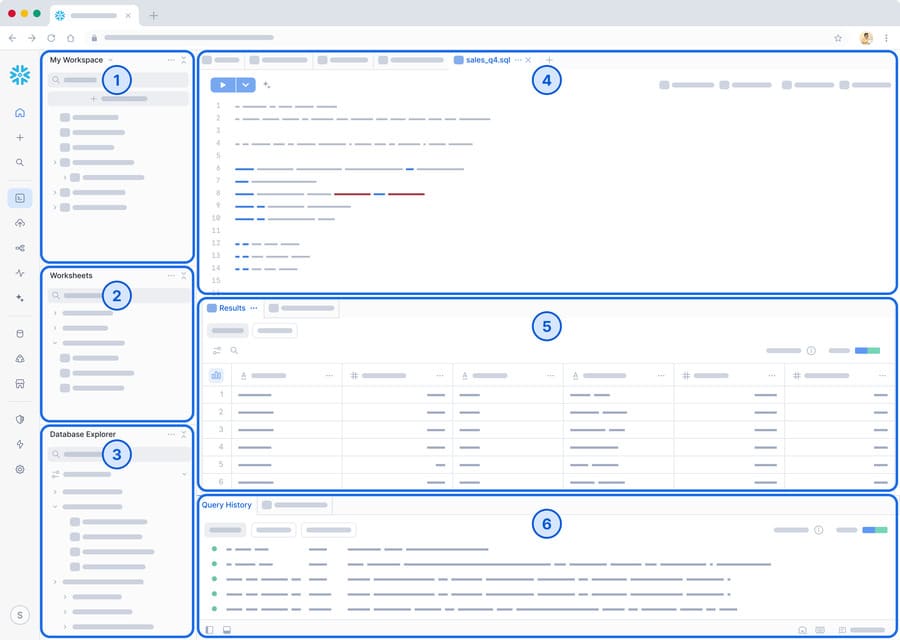

What Snowflake Cortex AI Actually Offers

Before diving into my experience, let me lay out what Cortex AI is and what it is not. Snowflake has been aggressively bundling AI features under the Cortex umbrella, and the marketing makes it sound like one unified product. In practice, it is four distinct feature groups with different maturity levels, different pricing models, and different levels of usefulness.

- Cortex LLM Functions - SQL-callable functions like COMPLETE, SUMMARIZE, TRANSLATE, EXTRACT_ANSWER, CLASSIFY, and SENTIMENT. These run foundation models (Mistral, Llama, Arctic) directly inside Snowflake.

- Cortex Search - A managed RAG (Retrieval-Augmented Generation) service. You create a search service on your data, and Snowflake handles embedding, indexing, and retrieval. No external vector database needed.

- Cortex Fine-Tuning - Fine-tune supported LLMs on your own data without leaving Snowflake. Currently supports Mistral 7B and Llama models.

- ML Functions - Built-in machine learning for time series forecasting, anomaly detection, and contribution explorer. These are not LLM-based; they are classical ML models that Snowflake trains and runs for you.

Each of these has a different billing mechanism, different limitations, and different levels of production readiness. Let me walk through each one based on what we actually shipped.

Cortex LLM Functions: SQL Meets Large Language Models

This is the headline feature, and I will admit it is genuinely satisfying the first time you run an LLM inference call as a SQL query. No API keys, no HTTP calls, no Python scripts stitching things together. Just SQL.

COMPLETE: The General-Purpose LLM Function

COMPLETE is the most flexible Cortex LLM function. You give it a model name, a prompt, and it returns generated text. We use it to generate product descriptions from structured catalog data for our e-commerce client.

-- Generate product descriptions from structured data

SELECT

product_id,

product_name,

SNOWFLAKE.CORTEX.COMPLETE(

'mistral-large2',

CONCAT(

'Write a concise 2-sentence product description for an e-commerce listing. ',

'Product: ', product_name, '. ',

'Category: ', category, '. ',

'Key features: ', features, '. ',

'Price range: ', price_tier, '.'

)

) AS generated_description

FROM catalog.products

WHERE description IS NULL

LIMIT 100;

That works. It actually works well. The latency per row is roughly 1.5 to 4 seconds depending on the model and prompt length, which matters enormously when you are processing thousands of rows. We quickly learned to batch these into smaller runs rather than trying to process an entire table at once.
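To see why the per-row latency pushes you toward batching, here is a rough back-of-the-envelope sketch. It assumes the calls run strictly one after another, which is my simplification and not how Snowflake necessarily schedules them, so treat the numbers as a pessimistic upper bound. The function name is made up for illustration.

```python
def sequential_runtime_hours(rows: int, seconds_per_row: float) -> float:
    """Wall-clock hours if every row's LLM call ran one after another (assumption)."""
    return rows * seconds_per_row / 3600


# Latency range quoted in the text: roughly 1.5 to 4 seconds per row.
for rows in (100, 1_000, 10_000):
    low = sequential_runtime_hours(rows, 1.5)
    high = sequential_runtime_hours(rows, 4.0)
    print(f"{rows:>6} rows: {low:.2f} to {high:.2f} hours")
```

Even if real throughput is considerably better than this, the estimate makes it clear why a single query over a large table is a bad first experiment.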

You can also use COMPLETE with structured options for more control:

-- COMPLETE with options: temperature, max_tokens, system prompt

SELECT

ticket_id,

SNOWFLAKE.CORTEX.COMPLETE(

'mistral-large2',

[

{'role': 'system', 'content': 'You are a support ticket classifier. Respond with only the category name.'},

{'role': 'user', 'content': description}

],

{'temperature': 0.1, 'max_tokens': 50}

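):choices[0]:messages::STRING AS category -- with options, the response is a JSON object; the text sits under choices[0].messages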

FROM support.tickets

WHERE auto_category IS NULL;

SUMMARIZE: Surprisingly Useful

SUMMARIZE takes a text column and produces a summary. We use it on customer feedback and support transcripts. The function is straightforward and the results are solid for most use cases.

-- Summarize long customer feedback entries

SELECT

feedback_id,

customer_id,

LENGTH(raw_feedback) AS original_length,

SNOWFLAKE.CORTEX.SUMMARIZE(raw_feedback) AS summary,

LENGTH(SNOWFLAKE.CORTEX.SUMMARIZE(raw_feedback)) AS summary_length

FROM customer.feedback

WHERE LENGTH(raw_feedback) > 1000

ORDER BY created_at DESC

LIMIT 50;

One gotcha: SUMMARIZE does not let you control summary length or style. You get what you get. For cases where we needed bullet-point summaries or specific formats, we switched to COMPLETE with a custom prompt. SUMMARIZE is good for the 80% case where you just need a quick digest.

TRANSLATE: Works, With Caveats

Our dataset includes customer reviews in six languages. TRANSLATE handles the common European languages well but struggles with less common language pairs. Here is a straightforward example:

-- Translate customer reviews to English for unified analysis

SELECT

review_id,

original_language,

review_text,

SNOWFLAKE.CORTEX.TRANSLATE(review_text, original_language, 'en') AS english_text

FROM reviews.international

WHERE original_language != 'en'

AND translated_at IS NULL;

Quality for French, German, and Spanish to English is comparable to DeepL. Japanese and Korean translations were noticeably worse, with awkward phrasing and occasional meaning drift. For those languages, we still route to an external translation API.
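The routing rule described above can be sketched in a few lines. This is only an illustration of the decision, not our production code: the language codes, the function name, and the returned labels are all hypothetical.

```python
# Languages where the review found Cortex TRANSLATE noticeably weaker.
EXTERNAL_LANGUAGES = {"ja", "ko"}


def choose_translator(language_code: str) -> str:
    """Pick a translation backend for a review written in `language_code`."""
    code = language_code.strip().lower()
    if code == "en":
        return "none"              # already English, nothing to translate
    if code in EXTERNAL_LANGUAGES:
        return "external_api"      # Japanese and Korean go to an external service
    return "cortex_translate"      # everything else stays inside Snowflake


for lang in ("fr", "de", "ja", "ko", "en"):
    print(lang, "->", choose_translator(lang))
```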

CLASSIFY and SENTIMENT: Quick Wins

CLASSIFY (exposed in SQL as CLASSIFY_TEXT) assigns a text to one of the categories you provide. SENTIMENT returns a score from -1 (most negative) to 1 (most positive). Both are fast and cheap.

-- Classify support tickets into predefined categories

SELECT

ticket_id,

subject,

SNOWFLAKE.CORTEX.CLASSIFY_TEXT(

description,

['billing_issue', 'technical_bug', 'feature_request', 'account_access', 'general_inquiry']

):label::STRING AS predicted_category,

SNOWFLAKE.CORTEX.CLASSIFY_TEXT(

description,

['billing_issue', 'technical_bug', 'feature_request', 'account_access', 'general_inquiry']

):score::FLOAT AS confidence

FROM support.tickets

WHERE created_at >= DATEADD('day', -7, CURRENT_TIMESTAMP());

-- Sentiment analysis on product reviews

SELECT

product_id,

AVG(SNOWFLAKE.CORTEX.SENTIMENT(review_text)) AS avg_sentiment,

COUNT(*) AS review_count,

COUNT_IF(SNOWFLAKE.CORTEX.SENTIMENT(review_text) > 0.3) AS positive_count,

COUNT_IF(SNOWFLAKE.CORTEX.SENTIMENT(review_text) < -0.3) AS negative_count

FROM reviews.product_reviews

WHERE review_date >= '2025-12-01'

GROUP BY product_id

ORDER BY avg_sentiment ASC;

SENTIMENT is the function I recommend most for teams just starting with Cortex. It is fast, the results are consistent, and it removes the need for external sentiment analysis libraries entirely. CLASSIFY is more variable. It works well with clearly distinct categories but gets confused when categories overlap semantically.
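The positive and negative counts in the query above come from a simple threshold on the score. A minimal Python sketch of the same bucketing, using the query's cutoffs of 0.3 and -0.3, looks like this. The sample scores are made up purely for illustration.

```python
def bucket_sentiment(score: float, threshold: float = 0.3) -> str:
    """Mirror the COUNT_IF cutoffs: above +threshold is positive, below -threshold is negative."""
    if score > threshold:
        return "positive"
    if score < -threshold:
        return "negative"
    return "neutral"


def summarize_scores(scores: list[float]) -> tuple[float, dict[str, int]]:
    """Return the average score and the count of reviews in each bucket."""
    counts = {"positive": 0, "neutral": 0, "negative": 0}
    for score in scores:
        counts[bucket_sentiment(score)] += 1
    average = sum(scores) / len(scores)
    return average, counts


# Hypothetical scores for one product.
average, counts = summarize_scores([0.8, -0.6, 0.1, 0.3])
print(f"avg={average:.2f}", counts)
```

Note that a score of exactly 0.3 lands in the neutral bucket, because the query uses strict comparisons.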

Cortex Search: RAG Without Leaving Snowflake

Cortex Search is Snowflake's answer to the "we need a vector database" problem. You create a search service, point it at a table, and it handles chunking, embedding, and retrieval. For teams that are already in Snowflake and want to build a RAG pipeline without managing Pinecone or Weaviate, this is attractive.

-- Create a Cortex Search service on your knowledge base

CREATE OR REPLACE CORTEX SEARCH SERVICE knowledge_base_search

ON content -- the text column to search

ATTRIBUTES category -- columns that can be used in search filters

WAREHOUSE = 'CORTEX_WH_S'

TARGET_LAG = '1 hour'

AS (

SELECT

article_id,

title,

content,

category,

last_updated

FROM knowledge_base.articles

WHERE status = 'published'

);

Once the service is created, you can query it from Python using the Snowflake Python API (the snowflake.core package):

from snowflake.core import Root

# Connect to the search service (assumes `session` is an existing Snowpark Session)

root = Root(session)

search_service = (

root.databases["KNOWLEDGE_BASE"]

.schemas["PUBLIC"]

.cortex_search_services["KNOWLEDGE_BASE_SEARCH"]

)

# Run a search query

results = search_service.search(

query="How do I reset my API credentials?",

columns=["article_id", "title", "content"],

filter={"@eq": {"category": "developer_docs"}},

limit=5

)

for result in results.results:

    print(f"Score: {result['@search_score']:.3f} | {result['title']}")

    print(f" {result['content'][:200]}...")

    print()

We built an internal knowledge base search for our documentation team using Cortex Search. Setup was genuinely faster than our previous approach with pgvector. The TARGET_LAG parameter controls how fresh the index stays, which is a nice touch. Set it to one hour and Snowflake automatically re-indexes when data changes.

The limitations are real though. You cannot bring your own embeddings. You cannot choose the embedding model. You cannot access the raw vectors for your own similarity calculations. If you need any of that, you need an external vector store. For straightforward search-and-retrieve workflows, Cortex Search is good enough. For anything more sophisticated, it is not.

Cortex Fine-Tuning: Promising but Early

We fine-tuned a Mistral 7B model on our support ticket classification task. The process is SQL-driven, which is consistent with the Cortex philosophy of keeping everything in SQL.

-- Prepare training data

CREATE OR REPLACE TABLE ml.training_data AS

SELECT

description AS prompt,

verified_category AS completion

FROM support.tickets_labeled

WHERE labeled_by = 'human'

AND label_confidence >= 0.95

ORDER BY RANDOM() -- random sample; a SAMPLE clause cannot follow WHERE

LIMIT 10000;

-- Launch fine-tuning job

SELECT SNOWFLAKE.CORTEX.FINETUNE(

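'CREATE',

'support_ticket_classifier', -- name for the tuned model (hypothetical)

'mistral-7b',

'SELECT prompt, completion FROM TRAINING_DB.ML.TRAINING_DATA',

'SELECT prompt, completion FROM VALIDATION_DB.ML.VALIDATION_DATA',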

{'learning_rate': 1e-5, 'num_epochs': 3}

) AS job_id;

-- Check job status

SELECT SNOWFLAKE.CORTEX.FINETUNE('SHOW') AS jobs;

The fine-tuned model improved our classification accuracy from 71% with the base model to 89%. That is a meaningful lift. However, the training took about 6 hours on our 10,000-example dataset, and the cost was approximately $180 in Cortex credits. That is not unreasonable for a one-time training run, but iterating on hyperparameters gets expensive fast.
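To put those numbers in perspective, here is a small worked calculation. It assumes every additional training run costs and takes the same as our first one, and that runs happen one after another. Both are simplifications of mine, not guarantees from Snowflake.

```python
BASE_ACCURACY = 0.71     # base model, from the text
TUNED_ACCURACY = 0.89    # fine-tuned model, from the text
COST_PER_RUN_USD = 180   # approximate cost of one training run
HOURS_PER_RUN = 6        # approximate duration of one training run


def relative_error_reduction(base_acc: float, tuned_acc: float) -> float:
    """Fraction of the base model's errors that the tuned model removes."""
    base_error = 1 - base_acc
    tuned_error = 1 - tuned_acc
    return (base_error - tuned_error) / base_error


def sweep_cost(num_runs: int) -> tuple[int, int]:
    """Total dollars and hours for `num_runs` sequential runs (assumed identical)."""
    return num_runs * COST_PER_RUN_USD, num_runs * HOURS_PER_RUN


reduction = relative_error_reduction(BASE_ACCURACY, TUNED_ACCURACY)
print(f"Errors removed: {reduction:.0%}")

for runs in (1, 5, 10):
    dollars, hours = sweep_cost(runs)
    print(f"{runs:>2} runs: ~${dollars} and ~{hours} hours")
```

Seen this way, the lift is larger than the raw 18 points suggest, but even a modest hyperparameter sweep quickly runs into four figures.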

The biggest limitation is model selection. You are restricted to the models Snowflake supports for fine-tuning. You cannot fine-tune GPT-4, Claude, or Gemini through Cortex. If you want to fine-tune those, you still need to go through their respective platforms.

ML Functions: The Underrated Gem

While everyone focuses on the LLM features, I think Snowflake's ML Functions are actually the most production-ready part of Cortex AI. These are not LLM-based. They use classical ML algorithms for three specific tasks: time series forecasting, anomaly detection, and contribution analysis. And they are excellent.

Forecasting

We replaced a custom Prophet-based forecasting pipeline with Snowflake's built-in FORECAST function. The setup time went from two weeks of engineering to about thirty minutes.

-- Create a forecasting model for daily revenue

CREATE OR REPLACE SNOWFLAKE.ML.FORECAST revenue_forecast(

INPUT_DATA => SYSTEM$REFERENCE('TABLE', 'analytics.daily_revenue'),

SERIES_COLNAME => 'business_unit',

TIMESTAMP_COLNAME => 'date',

TARGET_COLNAME => 'revenue',

CONFIG_OBJECT => {'prediction_interval': 0.95}

);

-- Generate 30-day forecast

CALL revenue_forecast!FORECAST(

FORECASTING_PERIODS => 30,

CONFIG_OBJECT => {'prediction_interval': 0.95}

);

-- Query the results

SELECT

series,

ts AS forecast_date,

forecast,

lower_bound,

upper_bound

FROM TABLE(RESULT_SCAN(LAST_QUERY_ID()))

ORDER BY series, ts;

The accuracy was within 3% of our Prophet model for 7-day forecasts and actually slightly better for 30-day forecasts. The model handles multiple time series (we have 12 business units) automatically via the SERIES_COLNAME parameter. No manual loop needed.

Anomaly Detection

Anomaly detection follows the same pattern. Create a model, train it on historical data, and then use it to flag outliers.

-- Create anomaly detection model on pipeline metrics

CREATE OR REPLACE SNOWFLAKE.ML.ANOMALY_DETECTION pipeline_anomaly_detector(

INPUT_DATA => SYSTEM$REFERENCE('TABLE', 'monitoring.pipeline_metrics'),

SERIES_COLNAME => 'pipeline_name',

TIMESTAMP_COLNAME => 'metric_timestamp',

TARGET_COLNAME => 'rows_processed',

LABEL_COLNAME => '' -- unsupervised

);

-- Detect anomalies in the last 24 hours

CALL pipeline_anomaly_detector!DETECT_ANOMALIES(

INPUT_DATA => SYSTEM$REFERENCE('TABLE', 'monitoring.pipeline_metrics_latest'),

SERIES_COLNAME => 'pipeline_name',

TIMESTAMP_COLNAME => 'metric_timestamp',

TARGET_COLNAME => 'rows_processed',

CONFIG_OBJECT => {'prediction_interval': 0.99}

);

-- Alert on detected anomalies

SELECT

series AS pipeline_name,

ts AS detected_at,

y AS actual_value,

forecast AS expected_value,

CASE WHEN is_anomaly THEN 'ANOMALY' ELSE 'NORMAL' END AS status

FROM TABLE(RESULT_SCAN(LAST_QUERY_ID()))

WHERE is_anomaly = TRUE

ORDER BY ts DESC;

We feed the anomaly results into a Snowflake alert that sends Slack notifications. The entire pipeline runs inside Snowflake with zero external infrastructure. That is the real value proposition here: not that the ML is better than what you could build yourself, but that the operational overhead vanishes.
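Conceptually, a point is flagged when the observed value falls outside the prediction interval around the forecast. The sketch below illustrates that idea and how an alert message could be assembled from a flagged row. It is not Snowflake's internal implementation, the class and function names are hypothetical, and the sample rows are made up.

```python
from dataclasses import dataclass


@dataclass
class MetricPoint:
    pipeline_name: str
    actual: float       # observed rows_processed (column y in the result)
    forecast: float     # expected value from the model
    lower_bound: float  # lower edge of the prediction interval
    upper_bound: float  # upper edge of the prediction interval


def is_anomaly(point: MetricPoint) -> bool:
    """Flag a point whose actual value lies outside the prediction interval."""
    return not (point.lower_bound <= point.actual <= point.upper_bound)


def format_alert(point: MetricPoint) -> str:
    """Build a one-line message suitable for a chat notification."""
    deviation = point.actual - point.forecast
    return (
        f"ANOMALY in {point.pipeline_name}: "
        f"actual={point.actual:.0f}, expected={point.forecast:.0f} "
        f"({deviation:+.0f})"
    )


# Made-up sample rows for illustration only.
points = [
    MetricPoint("orders_load", 98_500, 100_000, 90_000, 110_000),
    MetricPoint("events_load", 12_000, 100_000, 85_000, 115_000),
]

for p in points:
    if is_anomaly(p):
        print(format_alert(p))
```

A wider prediction interval, such as the 0.99 used in the call above, means fewer points fall outside the bounds and therefore fewer alerts.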

Contribution Explorer

Contribution Explorer is the least talked about ML function, but it has saved us hours of manual investigation. When a metric changes unexpectedly, it tells you which dimensions contributed most to the change.

-- Why did revenue drop last week?

CREATE OR REPLACE SNOWFLAKE.ML.CONTRIBUTION_EXPLORER revenue_change_analysis(

INPUT_DATA => SYSTEM$REFERENCE('TABLE', 'analytics.daily_revenue_detailed'),

LABEL_COLNAME => 'period', -- 'baseline' vs 'comparison'

METRIC_COLNAME => 'revenue'

);

CALL revenue_change_analysis!EXPLAIN();

-- View the top contributors to the change

SELECT

contributor,

contribution_score,

baseline_avg,

comparison_avg,

relative_change_pct

FROM TABLE(RESULT_SCAN(LAST_QUERY_ID()))

ORDER BY ABS(contribution_score) DESC

LIMIT 10;

Last month, we had an unexplained 18% revenue drop. Contribution Explorer identified in under 60 seconds that it was driven by a single product category in two regions, caused by a pricing configuration error. Manually, that would have taken a data analyst half a day of slicing and dicing dashboards.
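The underlying idea is easy to illustrate by hand: compare each segment between a baseline and a comparison period, then rank segments by how much of the total change they explain. The sketch below is a deliberately naive version of that idea, not the algorithm Snowflake uses, and the segment names and revenue figures are invented.

```python
def top_contributors(
    baseline: dict[str, float],
    comparison: dict[str, float],
    limit: int = 10,
) -> list[tuple[str, float, float]]:
    """Rank segments by absolute change; return (segment, delta, share_of_total_change)."""
    segments = set(baseline) | set(comparison)
    deltas = {s: comparison.get(s, 0.0) - baseline.get(s, 0.0) for s in segments}
    total_change = sum(deltas.values())
    rows = [
        (segment, delta, delta / total_change if total_change else 0.0)
        for segment, delta in deltas.items()
    ]
    # Largest absolute change first; segment name breaks ties deterministically.
    rows.sort(key=lambda row: (-abs(row[1]), row[0]))
    return rows[:limit]


# Invented revenue per (category / region) segment.
baseline = {"shoes/EU": 100.0, "shoes/US": 100.0, "hats/EU": 50.0}
comparison = {"shoes/EU": 60.0, "shoes/US": 98.0, "hats/EU": 52.0}

for segment, delta, share in top_contributors(baseline, comparison):
    print(f"{segment:<10} delta={delta:+.1f} share={share:+.0%}")
```

In this toy data a single segment accounts for the entire net drop, which is the kind of answer that takes a long time to find by clicking through dashboards.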

Cost Analysis: Cortex Credits vs External APIs

This is the section most people want to read, so let me be direct. Snowflake Cortex AI is not cheap. But the comparison is nuanced.

Cortex LLM functions are billed in Cortex credits, which are separate from your regular compute credits. As of early 2026, the pricing works out roughly like this:

| Operation | Cortex AI Cost (approx) | External Alternative (approx) | Verdict |
|---|---|---|---|
| COMPLETE (Mistral Large, 1K tokens) | ~$0.008 | OpenAI GPT-4o: ~$0.005 | Cortex is 60% more expensive |
| COMPLETE (Llama 3.1 70B, 1K tokens) | ~$0.004 | Together.ai Llama 70B: ~$0.002 | Cortex is ~2x more expensive |
| SUMMARIZE (per document) | ~$0.01 | OpenAI with prompt: ~$0.006 | Cortex is ~1.7x more expensive |
| SENTIMENT (per text) | ~$0.001 | AWS Comprehend: ~$0.0001 | Cortex is 10x more expensive |
| TRANSLATE (per 1K chars) | ~$0.005 | DeepL API: ~$0.002 | Cortex is 2-3x more expensive |
| Cortex Search (per month, small) | ~$400-800 | Pinecone Starter: ~$70 | Cortex much more expensive |
| ML Forecast (per model) | ~$2-5 per training | Prophet on EC2: ~$0.50 | Cortex more expensive but zero ops |
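The verdicts for the per-call rows are just the ratio of the two price columns. A short sketch that recomputes them from the approximate figures in the table follows. The prices are the rough numbers quoted above, not official list prices.

```python
# (Cortex cost, external cost) in USD, copied from the table's approximate figures.
UNIT_PRICES = {
    "COMPLETE (Mistral Large, 1K tokens)": (0.008, 0.005),
    "COMPLETE (Llama 3.1 70B, 1K tokens)": (0.004, 0.002),
    "SUMMARIZE (per document)": (0.01, 0.006),
    "SENTIMENT (per text)": (0.001, 0.0001),
    "TRANSLATE (per 1K chars)": (0.005, 0.002),
}


def cost_ratio(cortex_cost: float, external_cost: float) -> float:
    """How many times more expensive Cortex is than the external alternative."""
    return cortex_cost / external_cost


for operation, (cortex_cost, external_cost) in UNIT_PRICES.items():
    print(f"{operation:<40} {cost_ratio(cortex_cost, external_cost):.1f}x")
```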

On pure unit cost, Cortex loses almost every comparison. So why did we keep using it? Three reasons:

- No data movement. Sending 50 million customer records to an external API means egress costs, security reviews, and compliance headaches. Keeping everything in Snowflake eliminated about $2,000/month in data transfer costs and a six-week security review process.

- No infrastructure. We did not need to build, deploy, or monitor a separate inference service. The engineering time savings were roughly 2 FTE-weeks per quarter.

- Governance. Every Cortex call is logged in Snowflake's query history. We can audit exactly who ran what LLM function on which data. Try getting that from a standalone API integration.

Our monthly Cortex spend settled at approximately $3,200. The equivalent workload through external APIs would cost about $1,400 in raw API fees, plus approximately $800 in infrastructure and data transfer, for a total of about $2,200. So Cortex is roughly 45% more expensive in total cost of ownership, but with dramatically less operational complexity. Whether that trade-off is worth it depends entirely on your team's capacity and priorities.
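The total-cost comparison is simple enough to check directly. The sketch below uses only the monthly figures quoted above and does not attempt to price the engineering time saved.

```python
CORTEX_MONTHLY_USD = 3200         # our Cortex spend
EXTERNAL_API_FEES_USD = 1400      # raw API fees for the equivalent workload
EXTERNAL_OVERHEAD_USD = 800       # infrastructure and data transfer


def cost_premium(cortex: float, external_total: float) -> float:
    """Fractional premium of Cortex over the external total."""
    return (cortex - external_total) / external_total


external_total = EXTERNAL_API_FEES_USD + EXTERNAL_OVERHEAD_USD
premium = cost_premium(CORTEX_MONTHLY_USD, external_total)

print(f"External total: ${external_total}/month")
print(f"Cortex premium: ${CORTEX_MONTHLY_USD - external_total}/month ({premium:.0%})")
```

The open question for any team is whether that monthly difference is smaller than the cost of the engineering hours it buys back.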

Limitations and Gotchas: What Nobody Tells You

Three months of production use surfaced a number of issues that are not in the documentation. Here is what caught us off guard.

Latency Is Unpredictable

COMPLETE calls to the same model with the same prompt length can vary from 1.2 seconds to 9 seconds. There is no SLA on Cortex LLM latency. We had a dashboard that called SENTIMENT on incoming support tickets in near-real-time, and the latency spikes made it unusable. We moved that to a scheduled task that runs every 15 minutes instead.

Row Limits on LLM Functions

Do not try to run COMPLETE on a million-row table. Snowflake will throttle you aggressively. We found that batches of 500 to 1,000 rows work best. Anything larger and you start getting timeout errors or the query runs for hours. We built a simple batching pattern:

-- Batch processing pattern for Cortex LLM functions

-- Process in chunks of 500 using a control table

CREATE OR REPLACE TABLE processing.cortex_batch_control AS

SELECT

ROW_NUMBER() OVER (ORDER BY id) AS row_num,

id,

text_column

FROM source_table

WHERE needs_processing = TRUE;

-- Process batch N (run in a loop via task or external orchestrator)

SET batch_size = 500;

SET batch_number = 1; -- increment this

INSERT INTO results_table (id, llm_output)

SELECT

id,

SNOWFLAKE.CORTEX.COMPLETE('mistral-large2', text_column) AS llm_output

FROM processing.cortex_batch_control

WHERE row_num BETWEEN (($batch_number - 1) * $batch_size + 1)

AND ($batch_number * $batch_size);

Model Availability Is Limited

You cannot use GPT-4, Claude, or Gemini through Cortex. You are limited to Snowflake Arctic, Mistral models, Llama models, and a few others. For many tasks, Mistral Large is good enough. For complex reasoning or nuanced text generation, the quality gap with GPT-4o or Claude 3.5 is noticeable. We use Cortex for bulk processing tasks where good-enough is fine, and external APIs for high-stakes generations.

Cortex Search Cannot Do Hybrid Search

Cortex Search does vector similarity search. It does not support combining vector search with keyword search (hybrid search), which is what most production RAG systems end up needing. If you need BM25 plus vector similarity with a reranker, you are still going to need an external solution.

No Streaming Support

COMPLETE returns the entire response at once. There is no streaming option. If you are building a chatbot or any interactive application that benefits from token-by-token streaming, Cortex is not the right choice. We built our customer-facing chatbot using direct API calls to Anthropic and only use Cortex for backend batch processing.

Region Restrictions

Not all Cortex features are available in all Snowflake regions. We are on AWS us-east-1 and had full access, but a colleague's team on Azure West Europe was missing several models and the fine-tuning feature entirely. Check the documentation for your region before committing to a Cortex-dependent architecture.

Cortex AI vs Doing It Yourself: An Honest Comparison

For context, here is what our pre-Cortex architecture looked like for the same workloads:

Snowflake (data) --> Airflow DAG --> Python script --> OpenAI API --> Write results back to Snowflake. Total components: 4 services, 2 API keys, 1 orchestrator, 3 monitoring dashboards, and a partridge in a pear tree.

With Cortex:

Snowflake (data) --> SQL query with Cortex function --> Results in same table. Total components: 1 service, 0 API keys, 0 external orchestrators.

That simplification is real and it matters. Every external service is a point of failure, a secret to rotate, a version to upgrade, and a vendor to negotiate with. Cortex eliminates all of that for workloads that fit within its capabilities.

But the capabilities have boundaries. Here is my decision framework after three months:

| Use Case | Use Cortex | Use External |
|---|---|---|
| Bulk sentiment analysis | Yes | |
| Text classification (clear categories) | Yes | |
| Summarization (batch) | Yes | |
| Translation (major languages) | Yes | |
| Time series forecasting | Absolutely | |
| Anomaly detection | Absolutely | |
| Simple RAG / knowledge base search | Yes, if basic | |
| Customer-facing chatbot | | Yes (need streaming) |
| Complex reasoning tasks | | Yes (GPT-4o/Claude) |
| Hybrid search (vector + keyword) | | Yes (Elasticsearch, Vespa) |
| Translation (CJK languages) | | Yes (DeepL) |
| Real-time inference (<500ms) | | Yes (dedicated endpoint) |
| Custom embedding models | | Yes (bring your own) |

The Verdict: Where Snowflake Cortex AI Shines

After three months of production use, my overall assessment of Snowflake Cortex AI is cautiously positive. It is not a replacement for a dedicated ML platform. It is not going to obsolete your OpenAI or Anthropic API keys. But it fills a very specific and genuinely useful niche: bringing AI capabilities to data teams who are already in Snowflake and want to augment their data pipelines without building external infrastructure.

The ML functions (forecasting, anomaly detection, contribution explorer) are the standout. They are production-ready, reasonably priced for the zero-ops convenience, and genuinely save engineering time. I would recommend these to any Snowflake customer without hesitation.

The LLM functions are useful for batch processing where you need good-enough quality on large volumes of data and the operational simplicity outweighs the cost premium. They are not suitable for latency-sensitive or quality-critical applications.

Cortex Search is good for simple internal search applications but lacks the flexibility of dedicated vector databases. Fine-tuning is promising but limited in model selection and expensive to iterate on.

The biggest risk I see is lock-in. Every Cortex function is Snowflake-proprietary SQL. If you build your entire ML pipeline on Cortex and later need to move to Databricks or BigQuery, you are rewriting everything. For some teams, that is an acceptable trade-off. For others, it is a dealbreaker.

My recommendation: start with the ML functions and SENTIMENT. Those deliver the most value with the least downside. Evaluate the LLM functions for batch workloads where data governance matters more than per-token cost. Skip Cortex Search unless your search requirements are genuinely simple. And keep your external API integrations for anything customer-facing or quality-critical.

Snowflake Cortex AI is not the future of ML engineering. But it is a genuinely useful addition to the Snowflake toolkit, and after three months, it has earned a permanent place in our stack for the right workloads.
