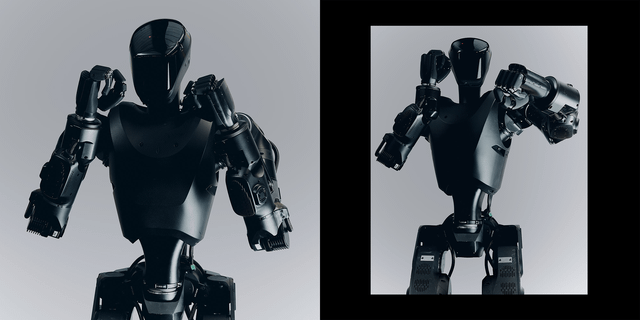

In a warehouse lab, engineers are debugging a walking sensor array designed for urban combat. Foundation's Phantom MK-1, a bipedal robot undergoing field evaluation in Ukraine, represents a shift in how military systems collect and act on data. To its developers, it is not a 'Terminator' but a platform for perception, navigation, and targeting algorithms, pitched to Pentagon officials as a tool for high-risk reconnaissance and structural entry.

The core argument from its creators is one of operational efficiency and risk mitigation: autonomous systems don't fatigue, and their deployment could reduce human casualties. For data and machine learning specialists, however, the alarm is less about science fiction than about deploying fundamentally unstable models into consequential environments.

The concern is that perception and decision-making software, notorious for edge-case failures and unexpected behaviors, is being integrated into armed hardware. This couples sensing directly to lethal action with no established audit trail. The technical challenge of ensuring predictable, accountable performance in chaotic real-world conditions remains unsolved, even as the platforms themselves advance.

From an engineering perspective, the transition appears irreversible. Major states are investing not because robots are flawless, but because the strategic calculus has changed. The ability to gather data and apply force without direct human exposure is now a primary engineering goal. The debate is no longer about whether such systems will be built, but about what constraints and validation frameworks, if any, will govern their deployment.

Source: Reddit AI