A new lawsuit presents what appears to be the first law-enforcement-documented case of illegal child sexual abuse material (CSAM) generated by Elon Musk's Grok AI. The complaint, filed Monday in U.S. district court, centers on three girls from Tennessee whose real photographs were allegedly altered by Grok to produce such material.

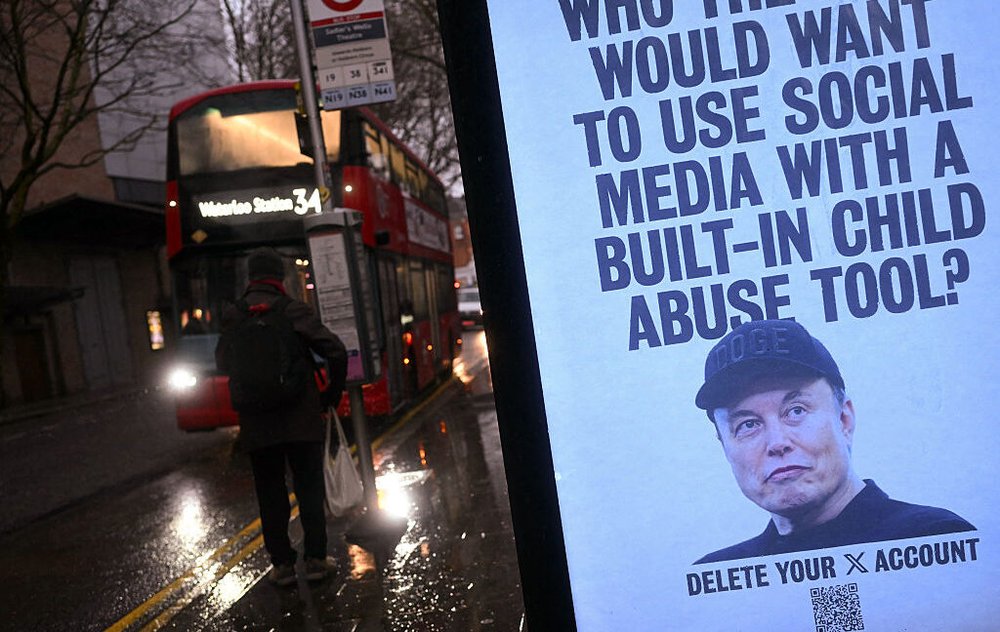

The case originated from a tip on Discord that led authorities to the material. It directly challenges Musk's repeated public denials: as recently as January, he said he had seen "literally zero" such images from Grok, despite a Wired report describing researchers' findings that nearly 10% of outputs from the standalone Grok Imagine app could be classified as CSAM.

Earlier this year, rather than implementing stricter filters, xAI restricted Grok's image generation to paying subscribers. Researchers at the Center for Countering Digital Hate had previously estimated that the system produced tens of thousands of sexualized images depicting children.

The lawsuit accuses Musk and xAI of intentionally designing a product to "profit off the sexual predation of real people, including children." It estimates "at least thousands of minors" have been victimized. The plaintiffs are seeking a court order to halt these outputs and are demanding compensatory and punitive damages for all affected minors.

Source: Ars Technica