- Meta’s Ranking Engineer Agent (REA) autonomously executes key steps across the end-to-end machine learning (ML) lifecycle for ads ranking models.

- This post covers REA’s ML experimentation capabilities: autonomously generating hypotheses, launching training jobs, debugging failures, and iterating on results. Future posts will cover additional REA capabilities.

- REA reduces the need for manual intervention. It manages asynchronous workflows spanning days to weeks through a hibernate-and-wake mechanism, with human oversight at key strategic decision points.

- In its first production rollout, REA delivered:

- 2x Model Accuracy: REA-driven iterations doubled average model accuracy over baseline across six models.

- 5x Engineering Output: With REA-driven iteration, three engineers delivered proposals to launch improvements for eight models — work that historically required two engineers per model.

The Bottleneck in Traditional ML Experimentation

Meta’s advertising system delivers personalized experiences to billions of people across Facebook, Instagram, Messenger, and WhatsApp. Powering these interactions are highly sophisticated, complex and massively distributed machine learning (ML) models that continuously evolve to serve both advertisers and people who use the platforms.

Optimizing these ML models has traditionally been time-consuming. Engineers craft hypotheses, design experiments, launch training runs, debug failures across complex codebases, analyze results and iterate. Each full cycle can span days to weeks. As Meta’s models have matured over the years, finding meaningful improvements has become increasingly challenging. The manual, sequential nature of traditional ML experimentation has become a bottleneck to innovation.

To address this, Meta built the Ranking Engineer Agent, an autonomous AI agent designed to drive the end-to-end ML lifecycle and iteratively evolve Meta’s ads ranking models at scale.

Introducing REA: A New Kind of Autonomous Agent

Many AI tools used in ML workflows today function as assistants: They are reactive, task-scoped and session-bound. They can help with individual steps (for example, drafting a hypothesis, writing configuration files, interpreting logs), but they typically cannot run an experiment end to end. An engineer still has to decide what to do next, re-establish context, and drive progress across long-running jobs — and debug inevitable failures.

REA is different: an autonomous agent built to drive the end-to-end ML lifecycle, coordinating and advancing ML experiments across multiday workflows with minimal human intervention.

REA addresses three core challenges in autonomous ML experimentation:

- Long-Horizon, Asynchronous Workflow Autonomy: ML training jobs run for hours or days, far beyond what any session-bound assistant can manage. REA maintains persistent state and memory across multiround workflows spanning days or weeks, staying coordinated without continuous human supervision.

- High-Quality, Diverse Hypothesis Generation: Experiment quality is only as good as the hypothesis that drives it. REA synthesizes outcomes from historical experiments and frontier ML research to surface configurations unlikely to emerge from any single approach, and it improves with every iteration.

- Resilient Operation Within Real-World Constraints: Infrastructure failures, unexpected errors and compute budgets can’t halt an autonomous agent. REA adapts within predefined guardrails, keeping workflows moving without escalating routine failures to humans.

REA addresses these challenges through a Hibernate-and-Wake Mechanism for continuous multiweek operation, a Dual-Source Hypothesis Engine that combines a historical insights database with a deep ML research agent, and a Three-Phase Planning Framework (Validation → Combination → Exploitation) that operates within engineer-approved compute budgets.

How REA Manages Multi-Day ML Workflows Autonomously

REA is built around a core insight: Complex ML optimization isn’t a single task. It is a multistage process that unfolds over days or weeks. The agent must reason, plan, adapt and persist across this entire horizon.

Long-Horizon Workflow Autonomy

Traditional AI assistants operate in short bursts, responding to prompts and then waiting for the next query. ML experimentation doesn’t work that way. Training jobs run for hours or days, and the agent must remain coordinated across these extended timelines.

REA uses a hibernate-and-wake mechanism. When the agent launches a training job, it delegates the wait to a background system, shuts down to conserve resources, and automatically resumes where it left off when the job completes. This enables efficient, continuous operation across extended time frames without requiring constant human monitoring.

To support this, Meta built REA on an internal AI agent framework, Confucius, designed for complex, multistep reasoning tasks. It provides strong code generation capabilities and a flexible SDK for integrating with Meta’s internal tooling systems, including job schedulers, experiment tracking infrastructure and codebase navigation tools.

High-Quality, Diverse Hypothesis Generation

The quality of the hypothesis directly determines the quality of an ML experiment. REA consults two specialized systems to generate diverse, high-quality ideas:

- Historical Insights Database: A curated repository of past experiments that enables in-context learning and pattern recognition across prior successes and failures.

- ML Research Agent: A deep research component that investigates baseline model configurations and proposes novel optimization strategies, using Meta’s historical insights database.

By synthesizing insights from both sources, REA surfaces configurations unlikely to emerge from any single approach in isolation. REA’s most impactful improvements have combined architectural optimizations with training-efficiency techniques — a result of this cross-system methodology.

Resilient Execution Within Real-World Constraints

Real-world experimentation operates under compute constraints and inevitable failures. REA addresses both through structured planning and autonomous adaptation.

Before executing any plan, REA proposes a detailed exploration strategy, estimates total GPU compute cost, and confirms the approach with an engineer. A typical multiphase plan proceeds through three stages:

- Validation: Individual hypotheses from different sources are tested in parallel to establish quality baselines.

- Combination: Promising hypotheses are combined to search for synergistic improvements.

- Exploitation (Intensive Optimization): The most promising candidates are explored aggressively to maximize results within the approved compute budget.

When REA encounters failures — such as infrastructure issues, unexpected errors, or suboptimal results — it adjusts the plan within predefined guardrails instead of waiting for human intervention. It consults a runbook of common failure patterns, makes prioritization decisions (such as excluding jobs with clear out-of-memory errors or training instability signals such as loss explosions), and debugs preliminary infrastructure failures from first principles. This resilience is critical for maintaining autonomy over long-horizon tasks, where engineers provide periodic oversight rather than continuous monitoring.

REA operates with rigorous safeguards. It works exclusively on Meta’s ads ranking model codebase. Engineers grant explicit access controls through preflight checklist reviews, and REA confirms compute budgets up front, halting or pausing runs when thresholds are reached.

The REA System Architecture

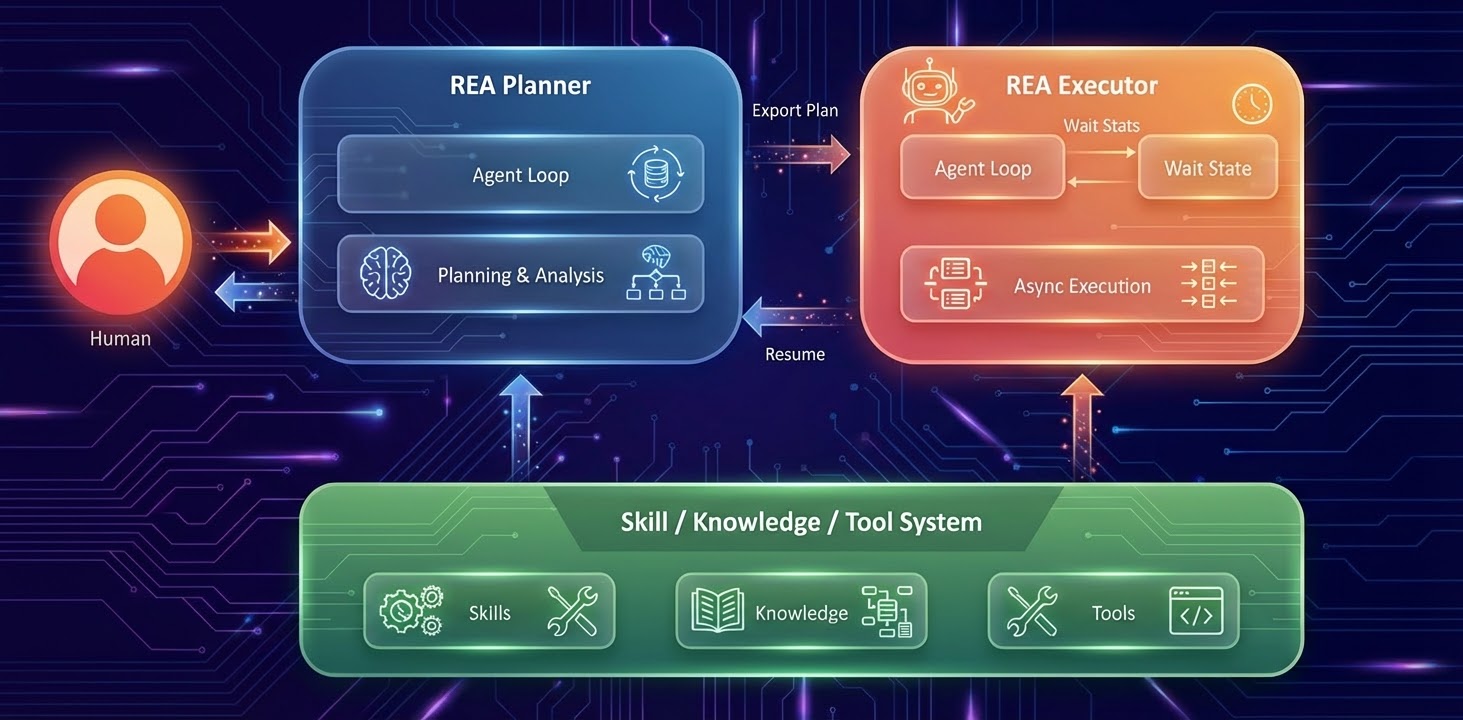

The Ranking Engineer Agent is built on two interconnected components, REA Planner and REA Executor, supported by a shared Skill, Knowledge and Tool System that provides ML capabilities, historical experiment data, and integrations with Meta’s internal infrastructure. Together, they directly enable the agent’s three core capabilities.

Long-Horizon Autonomy is powered by the execution flow: An engineer collaborates with the hypothesis generator to create a detailed experiment plan through the REA Planner. That plan is exported to the REA Executor, which manages asynchronous job execution through an agent loop and wait state, entering a wait state during training runs and resuming with results upon completion rather than requiring continuous human monitoring across multiweek workflows.

High-Quality, Diverse Hypothesis Generation is driven by the knowledge flow: As the executor completes experiments, a dedicated experiment logger records outcomes, key metrics, and configurations into a centralized hypothesis experiment insight database. This persistent memory accumulates knowledge across the full history of the agent’s operation. The hypothesis generator draws on these insights to identify patterns, learn from prior successes and failures, and propose increasingly sophisticated hypotheses for each subsequent round, closing the loop and compounding the system’s intelligence over time.

Resilient Execution is maintained across both flows: When the executor encounters failures, infrastructure errors, out-of-memory signals, or training instability, it consults a runbook of common failure patterns and applies prioritization logic to adapt autonomously within predefined guardrails. It then resumes the planner with actionable results rather than surfacing routine interruptions to engineers.

Impact: Model Accuracy and Engineering Productivity

2x Model Accuracy Over Baseline Approaches

In the first production validation across a set of six models, REA-driven iterations doubled average model accuracy over baseline approaches. This translates directly to stronger advertiser outcomes and better experiences on Meta platforms.

5x Engineering Productivity Gains

REA amplifies impact by automating the mechanics of ML experimentation, enabling engineers to focus on creative problem-solving and strategic thinking. Complex architectural improvements that previously required multiple engineers over several weeks can now be completed by smaller teams in days.

Early adopters using REA increased their model-improvement proposals from one to five in the same time frame. Work that once took two engineers per model now takes three engineers across eight models.

The Future of Human-AI Collaboration in ML Engineering

REA represents a shift in how Meta approaches ML engineering. By building agents that can autonomously manage the entire experimentation lifecycle, the team is changing the structure of ML development — moving engineers from hands-on experiment execution toward strategic oversight, hypothesis direction, and architectural decision-making.

This new paradigm, where agents handle iterative mechanics while humans make strategic decisions and final approvals, is just the beginning. Privacy, security, and governance remain key priorities for the agent. Meta continues to enhance REA’s capabilities by fine-tuning specialized models for hypothesis generation, expanding analysis tools, and extending the approach to new domains.

Acknowledgements

Ashwin Kumar, Chaorong Chen, Deepak Chandra, Fan Yang, Hangjun Xu, Harpal Bassali, Jakob Moberg, Jags Somadder, Jingyi Guan, Jp Owed, Junhua Gu, Liquan Huang, Matt Steiner, Peter Chu, Qinjin Jia, Ritwik Tewari, Sandeep Pandey, Santanu Kolay, Shweta Memane, Vitor Cid, Wenlin Chen, Xiaoyu Deng, Xin Zhao, Zach Freeman, Zhaodong Wang, Zoe Zu

The post Ranking Engineer Agent (REA): The Autonomous AI Agent Accelerating Meta’s Ads Ranking Innovation appeared first on Engineering at Meta.