Oracle Database for Data Engineers: Partitioning, Parallelism, and Fast Analytics

Why this matters

You inherit a warehouse that crawls at month-end. Queries spike to hours, ETL windows bust, and dashboards time out. Oracle can absolutely fly—if you lean on the features built for scale: partitioning, parallelism, materialized views, and smart loading patterns. This guide shows what to use, when, and how.

Architecture essentials (data-engineering view)

- Storage engine & segments: Tables and indexes live as segments inside tablespaces. Your tuning lever is how you lay out segments (partitioning, compression, indexing).

- Optimizer: Oracle’s cost-based optimizer (CBO) chooses plans using stats. If stats are stale, performance dies. Automate stats and verify histograms/skew.

- Parallel execution: Operators (scan, join, aggregation, DML) can run in parallel across processes. Use it to shrink batch windows.

- Redo/undo: Durability via redo logs; consistent reads via undo. Bulk operations must respect these—hence special modes (DIRECT=TRUE, NOLOGGING, etc.).

- Optional engines:

- Partitioning option: table/index partitioning, partition-wise joins, EXCHANGE PARTITION.

- In-Memory option: columnar copies in memory for star-joins/analytics.

- Advanced Compression / HCC (Exadata): compress cold segments at rest.

Core patterns you’ll actually use

1) Range partitioning for time-based data

When: Fact tables by event_date, rolling retention, fast pruning, cheap deletes.

CREATE TABLE fact_orders (

order_id NUMBER,

customer_id NUMBER,

amount NUMBER(12,2),

event_date DATE

)

PARTITION BY RANGE (event_date) (

PARTITION p2025m10 VALUES LESS THAN (DATE '2025-11-01'),

PARTITION p2025m11 VALUES LESS THAN (DATE '2025-12-01'),

PARTITION pmax VALUES LESS THAN (MAXVALUE)

);

Why: Prunes partitions by date so scans stay small; enables partition-wise joins if dimensions are co-partitioned.

Pitfalls: Missing future partitions causes inserts to fail. Automate partition creation.

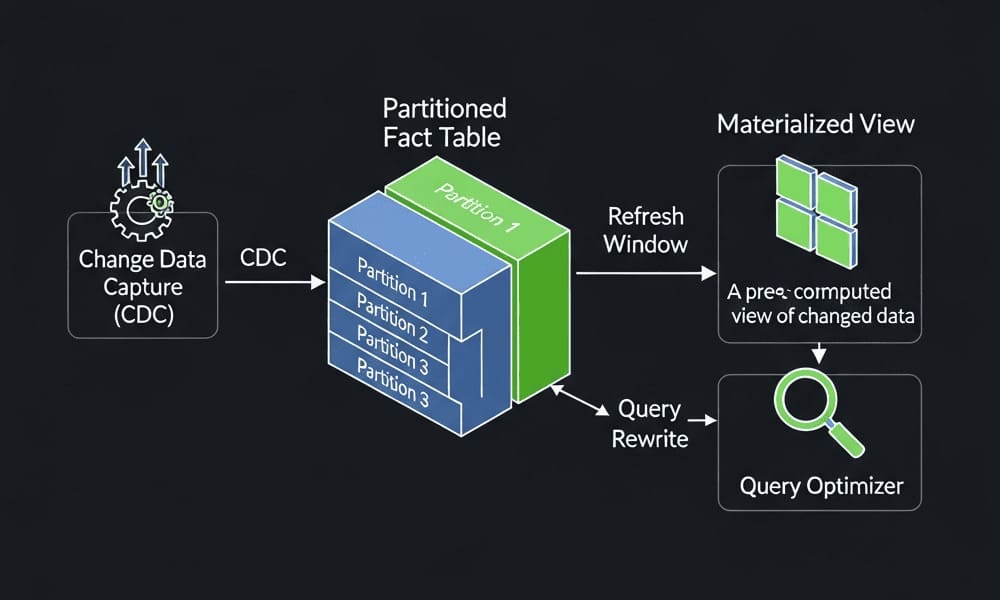

2) Partition exchange load (near-zero downtime swaps)

Load into a staging table with the same structure, then swap it in atomically.

-- Staging table for Nov 2025 data

CREATE TABLE fact_orders_stg ... -- same cols and constraints

PARTITION BY RANGE (event_date) (

PARTITION p2025m11 VALUES LESS THAN (DATE '2025-12-01')

);

-- After loading the staging partition:

ALTER TABLE fact_orders

EXCHANGE PARTITION p2025m11

WITH TABLE fact_orders_stg

WITHOUT VALIDATION; -- assume integrity already enforced

Why: Fast, minimal redo; no blocking scans.

Pitfalls: Structures must match exactly (check constraints, indexes). Validate in lower envs first.

3) Parallel query & DML to compress batch windows

-- Enable parallelism for the session or statement

ALTER SESSION ENABLE PARALLEL DML;

INSERT /*+ PARALLEL(fact_orders, 8) */ INTO fact_orders

SELECT /*+ PARALLEL(src, 8) */ * FROM staging_src src;

COMMIT;

When: Large scans/loads, nightly aggregation, historical backfills.

Pitfalls: Parallel isn’t free—watch I/O, temp space, concurrency. Cap degrees per workload class.

4) External tables for zero-copy ingestion (CSV/Parquet)

Great for staging data without first landing inside Oracle segments.

CREATE TABLE ext_clicks (

user_id NUMBER,

ts TIMESTAMP,

url VARCHAR2(2000)

)

ORGANIZATION EXTERNAL (

TYPE ORACLE_LOADER

DEFAULT DIRECTORY ingest_dir

ACCESS PARAMETERS (

RECORDS DELIMITED BY NEWLINE

FIELDS TERMINATED BY ','

)

LOCATION ('clicks_2025_11.csv')

)

REJECT LIMIT UNLIMITED;

Why: Query files directly, validate, then CTAS/INSERT into partitioned tables.

Pitfalls: No indexes/stats on external data; plan for CTAS + stats gather post-load.

5) Materialized views (MVs) with FAST refresh

Summarize once, serve many.

CREATE MATERIALIZED VIEW mv_daily_sales

BUILD IMMEDIATE

REFRESH FAST ON COMMIT

ENABLE QUERY REWRITE AS

SELECT

TRUNC(event_date) AS day,

customer_id,

SUM(amount) AS sales

FROM fact_orders

GROUP BY TRUNC(event_date), customer_id;

When: Repeated aggregates, star schemas, BI dashboards.

Pitfalls: FAST refresh needs materialized view logs on base tables and precise determinism. If you can’t meet FAST rules, use periodic COMPLETE refresh.

6) Analytic functions for windowed metrics

SELECT

customer_id,

event_date,

amount,

SUM(amount) OVER (PARTITION BY customer_id

ORDER BY event_date

ROWS BETWEEN 6 PRECEDING AND CURRENT ROW) AS amt_7d

FROM fact_orders

WHERE event_date >= DATE '2025-10-01';

Why: Moving sums, ranks, gaps—done in SQL, not Python. Often faster and cheaper.

Pitfalls: Windows explode temp usage if not pruned; filter early and keep partitions narrow.

Feature comparison (quick cheat sheet)

| Use case | Oracle feature | What to remember |

|---|---|---|

| Time-series facts | Range partitioning | Automate future partitions; co-partition dims for partition-wise joins |

| Fast swaps | EXCHANGE PARTITION | Staging and target must match structure |

| Heavy ETL | Parallel DML/Query | Set sensible degrees; monitor temp/undo/IOPS |

| Reusable aggregates | Materialized views | Add MV logs; use FAST or scheduled COMPLETE |

| File landing zone | External tables / SQL*Loader / Data Pump | Validate first; CTAS + gather stats |

| Star joins | In-Memory Column Store (option) | Lightning-fast scans; watch memory footprint |

| Cold data | Compression/HCC | Great for archives; extra CPU to decompress |

End-to-end example: daily load with prune-friendly partitions

- Land new CSV to the external directory → validate counts.

CTASinto a same-shape staging table withNOLOGGINGandPARALLEL.- Gather stats on staging.

EXCHANGE PARTITIONinto the main table.- Refresh dependent MVs.

CREATE TABLE fact_orders_stg NOLOGGING PARALLEL 8 AS

SELECT /*+ PARALLEL(8) */

order_id, customer_id, amount, event_date

FROM ext_orders_2025_11;

BEGIN

DBMS_STATS.GATHER_TABLE_STATS(

ownname => 'DW',

tabname => 'FACT_ORDERS_STG',

method_opt => 'FOR ALL COLUMNS SIZE AUTO');

END;

/

ALTER TABLE fact_orders

EXCHANGE PARTITION p2025m11 WITH TABLE fact_orders_stg

WITHOUT VALIDATION;

EXEC DBMS_MVIEW.REFRESH('MV_DAILY_SALES','F'); -- FAST if eligible

Best practices (that save you pain)

- Stats as code: Schedule

DBMS_STATSwith column histograms where skew exists. Check plan stability after schema changes. - Degrees of parallelism (DOP): Fix caps per queue/class. Don’t let ad-hoc BI spike to 64 DOP and starve ETL.

- Prune or pay: Every large table should be partitioned or sub-partitioned on the primary access dimension (usually date).

- Design for EXCHANGE: Treat monthly partitions as immutable slices. All ingestion targets should be exchange-ready.

- Separate hot/cold: Hot data in In-Memory/OLTP-friendly storage; cold data compressed. Different tablespaces, different expectations.

- Observe reality, not beliefs: Capture ASH/AWR baselines, track plan hash changes, and compare DOP vs. elapsed time and I/O.

- Security & governance: Use roles/least privilege, Data Redaction/Virtual Private Database if needed. Don’t embed creds in jobs.

Common pitfalls

- Stale stats → “mystery” slowdowns.

- Missing local indexes after exchange. Rebuild or use global-prefixed strategies.

- One giant partition. Monthly/weekly works better for rolling ops than yearly.

- ON COMMIT MVs on heavy OLTP tables. Consider scheduled FAST/COMPLETE instead.

- Parallel everywhere. It’s a scalpel, not a default.

Internal link ideas (keep it official)

Link to Oracle’s official guides for: Partitioning, Parallel Execution, Data Pump & SQL*Loader, External Tables, Materialized Views, In-Memory Column Store, DBMS_STATS, AWR/ASH performance tuning.

Summary & call-to-action

Oracle is not “just OLTP.” With partitioning, parallelism, external tables, and materialized views, you can turn batch hours into minutes and keep dashboards snappy. Start by partitioning your biggest fact, automate stats, and redesign your load around EXCHANGE PARTITION. Then measure AWR before/after—let the numbers brag.

CTA: Want a hands-on checklist tailored to your schema? Say “Assess my Oracle pipeline,” paste table DDL + a sample AWR, and I’ll map your next four wins.

Image prompt

“A clean, modern diagram of an Oracle data warehouse: partitioned fact table, staging area with external tables, exchange partition workflow, parallel query arrows, and a materialized view layer — minimalistic, high contrast, isometric 3D.”

Tags

#OracleDatabase #DataEngineering #Partitioning #ParallelQuery #MaterializedViews #ETL #Analytics #PerformanceTuning #DatabaseDesign

Pitch ideas (next articles)

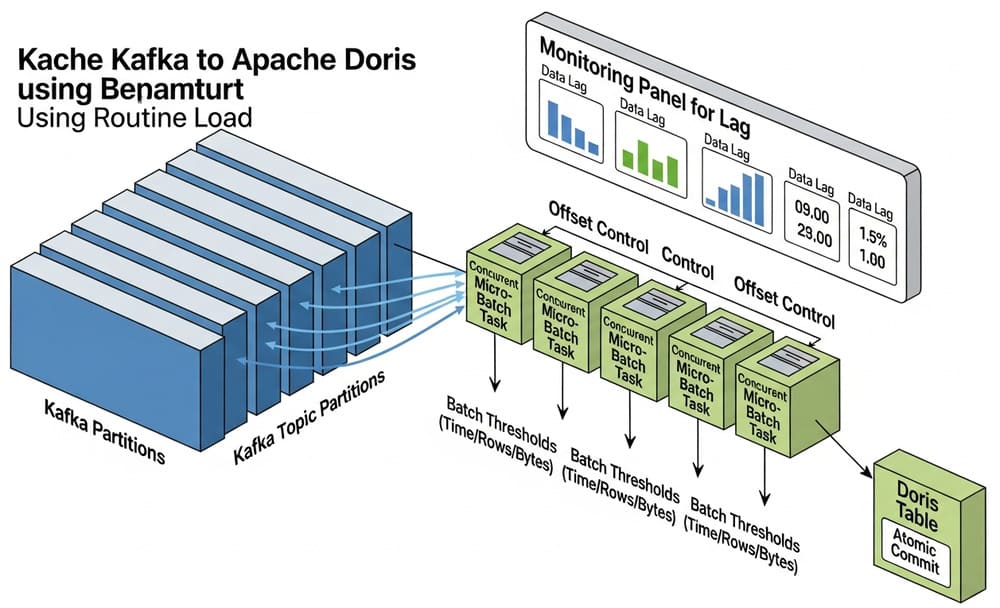

Hybrid Architectures: Oracle as system of record with Kafka/Spark/Snowflake for analytics offloading—practical patterns.

Oracle Partitioning Playbook: Range vs list vs hash; local vs global indexes; exchange/merge/split with timelines.

Parallel Execution Deep Dive: DOP math, skew handling, and monitoring PX servers like a pro.

Materialized Views in the Real World: Designing for FAST refresh and query rewrite, with failure modes.

From Files to Facts: External tables, SQL*Loader vs Data Pump, and CTAS pipelines with stats automation.

Optimizer Truth Serum: DBMS_XPLAN, bind peeking, adaptive plans, and keeping plan hash stable across releases.

In-Memory Column Store for BI: Star transformations, vectorized scans, and cost/benefit versus extra RAM.

Secure by Design: Virtual Private Database, Data Redaction, TDE, and role patterns for analytics teams.